Network Throughput – What is It, How To Measure & Optimize!

UPDATED: December 14, 2023

When people describe their web browsing (or general Internet) experience, they usually speak in terms of speed, saying something like, “My Internet connection is really slow!” However, this thing they call “speed” is the result of many networking-related elements, including bandwidth, throughput, latency, and packet loss.

In this article, we will be looking at one of those factors that affect the speed on a network – Network Throughput.

Network Throughput

According to the Google dictionary, Throughput is defined as “the amount of material or items passing through a system or process.” Relating this to networking, the materials are referred to as “packets” while the system they are passing through is a particular “link”, physical or virtual.

Furthermore, network throughput is typically measured per unit time between two devices and expressed in bits per second (bps), kilobits per second (Kbps), megabits per second (Mbps), gigabits per second (Gbps), and so on.

So, for example, if a packet with a size of 100 bytes takes 1 second to flow from Computer_A to Computer_B, we can say the throughput between the two devices is 800 bps.

Note: 1 byte is equal to 8 bits. Therefore, 100 bytes is 800 bits, resulting in the throughput calculation of 800 bits per second.
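The arithmetic in this example can be written as a short Python sketch. The function names `throughput_bps` and `format_rate` are illustrative only, and the formatter assumes the decimal prefixes (1 Kbps = 1,000 bps) normally used for network rates.

```python
def throughput_bps(num_bytes: int, seconds: float) -> float:
    """Return throughput in bits per second for num_bytes moved in `seconds`."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return num_bytes * 8 / seconds  # 1 byte = 8 bits


def format_rate(bps: float) -> str:
    """Render a bit rate with decimal prefixes (Kbps, Mbps, Gbps)."""
    for unit, factor in (("Gbps", 1e9), ("Mbps", 1e6), ("Kbps", 1e3)):
        if bps >= factor:
            return f"{bps / factor:.2f} {unit}"
    return f"{bps:.0f} bps"


# The example from the text: 100 bytes delivered in 1 second.
print(format_rate(throughput_bps(100, 1)))  # 800 bps
```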

Bandwidth vs. Throughput

Looking at the description above, one question that comes to mind is, “What is the difference between bandwidth and throughput?” To explain this difference, let’s use an analogy of water flowing through pipes.

Consider two pipes: PIPE A, which is narrow, and PIPE B, which is wider. Which pipe do you think will pass more water through it? The default answer is PIPE B because that is a fatter pipe. However, the real answer to the question is that “it depends”.

If water is flowing at maximum capacity through both pipes, then PIPE B will carry more water at any particular time. But what if much more water is coming into PIPE A than PIPE B? Or, what if there is debris in PIPE B that is restricting the flow of water inside the pipe?

In summary, we can conclude that in ideal conditions and at maximum capacity, PIPE B will carry more water than PIPE A. However, any number of factors can cause more water to flow through PIPE A per unit time.

Using the analogy above, Bandwidth can be compared to the fatness of the pipes (i.e. the maximum and theoretical capacity of the pipe) while Throughput is the actual amount of water that flows through per unit time. Therefore, even though bandwidth will set a limit on throughput, throughput can be affected by a host of other factors.

Factors that Affect Throughput

Now that we have seen the difference between bandwidth and throughput, let us take a detailed look at some of the factors that can affect the throughput on a network.

1. Transmission Medium Limitation

As we said above, the bandwidth (or theoretical capacity) of a particular transmission medium will limit the throughput over that medium. For example, a FastEthernet interface provides a theoretical data rate of 100 Mbps. Therefore, no matter how much traffic needs to be sent over that interface, it cannot exceed the 100 Mbps data rate. In reality, once framing and protocol overhead are accounted for, the practical data rate over such an interface will be about 95% of the theoretical capacity.
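One way to see where a figure of about 95% can come from is to count the bytes that travel with each full-sized frame but carry no application data. The sketch below assumes TCP over IPv4 on standard Ethernet with a 1500-byte MTU and no header options; these assumptions are ours, and other traffic mixes will give different ratios.

```python
# Per-frame byte counts for standard Ethernet carrying TCP over IPv4.
MTU = 1500           # largest IP packet in one standard Ethernet frame
IP_HEADER = 20       # IPv4 header without options
TCP_HEADER = 20      # TCP header without options
ETH_HEADER = 14      # destination MAC, source MAC, EtherType
ETH_FCS = 4          # frame check sequence
PREAMBLE_SFD = 8     # preamble plus start-of-frame delimiter
INTERFRAME_GAP = 12  # mandatory idle time, expressed in byte times


def tcp_goodput_mbps(link_mbps: float) -> float:
    """Best-case application data rate for full-sized TCP segments."""
    payload = MTU - IP_HEADER - TCP_HEADER  # 1460 bytes of user data
    on_wire = MTU + ETH_HEADER + ETH_FCS + PREAMBLE_SFD + INTERFRAME_GAP  # 1538
    return link_mbps * payload / on_wire


print(f"{tcp_goodput_mbps(100):.1f} Mbps")  # 94.9 Mbps on a 100 Mbps link
```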

2. Enforced Limitation

Let’s assume an organization wants to purchase 3 Mbps of link capacity from an ISP. Through what medium will the ISP deliver this capacity to the organization? The likelihood, based on current technologies, is that the ISP will use a medium that can theoretically deliver more capacity than the 3 Mbps being requested (e.g. MetroEthernet on a 100 Mbps interface).

As such, the ISP will use other features, such as traffic policing or shaping, to enforce the 3 Mbps capacity on the link, which will, in turn, cap the throughput on that link.
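A token bucket is one common way to enforce a rate below what the medium can carry. The sketch below is a simplified model and not any particular ISP's implementation; the class name `TokenBucket`, the 24,000-bit burst size, and the 10 Mbps offered load are all assumptions chosen for illustration.

```python
class TokenBucket:
    """Policer sketch: a packet passes only if enough tokens (bits) are available."""

    def __init__(self, rate_bps: int, burst_bits: int) -> None:
        self.rate_bps = rate_bps      # token refill rate, i.e. the enforced rate
        self.burst_bits = burst_bits  # bucket depth, i.e. the largest allowed burst
        self.tokens = float(burst_bits)  # start with a full bucket
        self.last_us = 0              # time of the previous update, in microseconds

    def allow(self, packet_bits: int, now_us: int) -> bool:
        # Refill for the elapsed time, never beyond the bucket depth.
        elapsed_us = now_us - self.last_us
        refill = elapsed_us * self.rate_bps / 1_000_000
        self.tokens = min(self.burst_bits, self.tokens + refill)
        self.last_us = now_us
        if packet_bits <= self.tokens:
            self.tokens -= packet_bits
            return True   # conforming packet is forwarded
        return False      # exceeding packet would be dropped by a policer


# Offer 10 Mbps of 1500-byte packets for one second to a 3 Mbps policer.
policer = TokenBucket(rate_bps=3_000_000, burst_bits=24_000)
packet_bits = 1500 * 8
interval_us = 1200  # one 12,000-bit packet every 1.2 ms is 10 Mbps
sent_bits = 0
for i in range(1_000_000 // interval_us):
    if policer.allow(packet_bits, now_us=i * interval_us):
        sent_bits += packet_bits

# The delivered rate ends up close to the enforced 3 Mbps, not the offered 10 Mbps.
print(f"Delivered about {sent_bits / 1e6:.2f} Mbps")
```

A shaper works on the same principle but queues exceeding packets and sends them later instead of dropping them.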

3. Network Congestion

The degree of congestion on a network will also affect throughput. For example, the experience of a single car on a 4-lane highway is much better than when there are 100 cars on the same highway. As a general rule, the more congested the network is, the less throughput will be available on that network (when viewed from the perspective of a single source-destination pair).

4. Latency

Latency (or delay) is the time it takes for a packet to get from sender to destination. For some types of traffic, the higher the latency on the network, the lower the throughput. Let’s take TCP for example: the sender can only have a limited amount of unacknowledged data (a window) in flight, so before more packets can be sent from source to destination, earlier ones must be acknowledged.

Therefore, if the acknowledgment is delayed, the average throughput measured over time will also be reduced. The throughput of other kinds of traffic such as UDP, which does not wait for acknowledgments, is not necessarily affected by latency.
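The effect of latency on TCP can be approximated with a simple bound: a single connection cannot move more than one window of data per round trip. The sketch below assumes a fixed 65,535-byte window (the largest TCP window without the window scaling option) and ignores loss and slow start, so it gives a ceiling and not a prediction.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound for one TCP connection: one window of data per round trip."""
    rtt_seconds = rtt_ms / 1000
    return window_bytes * 8 / rtt_seconds / 1e6


WINDOW = 65_535  # bytes; assumed fixed window, no window scaling

for rtt_ms in (1, 10, 100):
    limit = max_tcp_throughput_mbps(WINDOW, rtt_ms)
    print(f"RTT {rtt_ms:>3} ms -> at most {limit:.1f} Mbps")
```

With these assumptions, raising the round-trip time from 10 ms to 100 ms lowers the ceiling from roughly 52 Mbps to roughly 5 Mbps, regardless of the link's bandwidth.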

5. Packet Loss and Errors

Similar to latency, the throughput of certain kinds of traffic can be affected by packet loss and errors. This is because bad/lost packets may need to be retransmitted, reducing the average throughput between the devices communicating. Both latency and packet loss can be affected by a host of factors including bottlenecks, security attacks, and damaged devices.
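To get a rough feel for how much loss matters, one widely cited approximation (often called the Mathis model, which is not part of the original discussion) bounds steady-state TCP throughput by MSS / RTT multiplied by C / sqrt(p), where p is the packet loss rate and C is about 1.22. The sketch below assumes classic loss-based congestion control and random loss; modern TCP variants can behave differently.

```python
import math


def mathis_throughput_mbps(mss_bytes: int, rtt_ms: float, loss_rate: float) -> float:
    """Approximate TCP throughput ceiling under random packet loss."""
    if not 0 < loss_rate <= 1:
        raise ValueError("loss_rate must be in the range (0, 1]")
    c = math.sqrt(3 / 2)  # constant from the simplified model, about 1.22
    rtt_seconds = rtt_ms / 1000
    return (mss_bytes * 8 / rtt_seconds) * (c / math.sqrt(loss_rate)) / 1e6


# Assumed values: 1460-byte segments and a 50 ms round-trip time.
for loss in (0.0001, 0.001, 0.01):
    rate = mathis_throughput_mbps(1460, 50, loss)
    print(f"loss {loss:.2%} -> about {rate:.1f} Mbps")
```

In this model, a hundredfold increase in loss cuts the achievable throughput by a factor of ten.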

6. Protocol Operation

The protocol used to carry and deliver the packets over a link can also affect the throughput. Examples include the flow control and congestion avoidance features in TCP, which can impact when and how much data can be sent between two devices.

Measuring Throughput

You may decide to measure throughput for a variety of reasons including determining bottlenecks in a network, verifying service level agreements (SLAs), assessing network performance, and so on. For example, I was once called in to consult on a network where the organization was not feeling the effect of the 100 Mbps capacity they were purchasing from their ISP.

Upon investigation, we discovered that one of the interfaces on the edge router was faulty. We were able to make this discovery by measuring the throughput between devices connected to different ends (interfaces) of the router. This revealed a 70% drop in throughput, isolating the problem to that device.

There are several tools available to measure network throughput including SolarWinds Network Bandwidth Analyzer Pack, pingb, and iPerf.

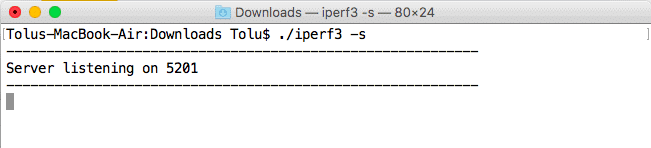

I particularly like iPerf based on experience (it was the tool that revealed the 70% throughput drop above) so let’s quickly see how this tool works. It requires a client and server to function. This just means you need two devices to measure throughput using iPerf – one running as a server and the other one running as a client making requests to the server.

Let’s start with the server. Once iPerf is downloaded (no install required; just run as an executable), open Command Prompt (or your Terminal), navigate to the location of the executable file, and type iperf3 -s to run in server mode. The default server port is 5201:

Note: On a Unix-based system (including macOS), you need to run the file as an executable by prefixing it with “./” (i.e. ./iperf3). On Windows, typing “iperf3” should be fine. Alternatively, you can specify the full filename as “iperf3.exe”.

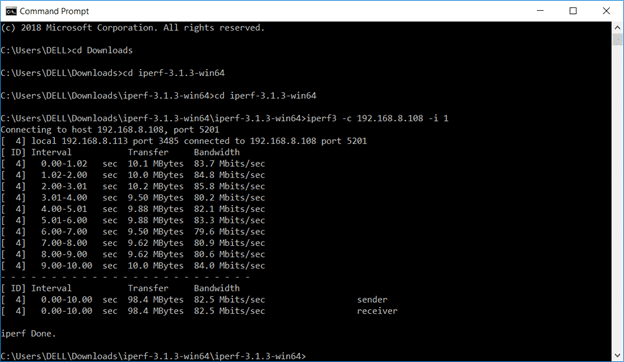

Once the server is running, you can make connections to it from a client using the command iperf3 -c <server ip address> along with any necessary options. For example, the screenshot below shows that the throughput between two devices on a network, one connected via Wi-Fi, and the other connected via a LAN cable, is 82.5 Mbps:

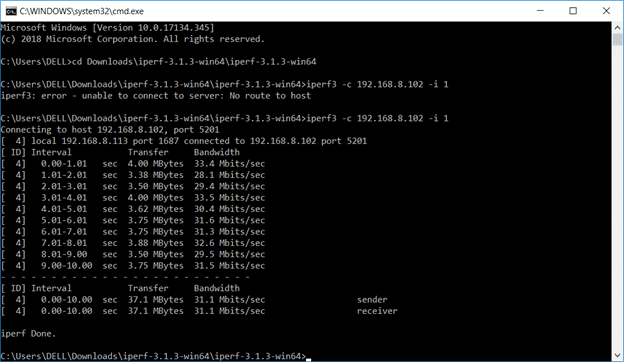
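If you want to record such measurements instead of reading them off the screen, iperf3 can emit its report as JSON with the -J option. The Python sketch below parses that report; the helper names `received_mbps` and `run_iperf3` are our own, and the `sample` string is a hand-written, heavily trimmed stand-in for real output (only the 82.5 Mbps figure comes from the measurement above).

```python
import json
import subprocess


def received_mbps(iperf3_json: str) -> float:
    """Extract the receiver-side TCP throughput, in Mbps, from an iperf3 JSON report."""
    report = json.loads(iperf3_json)
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_iperf3(server: str) -> float:
    """Run an iperf3 client test against `server` (needs iperf3 on the PATH)."""
    result = subprocess.run(
        ["iperf3", "-c", server, "-J"],
        capture_output=True, text=True, check=True,
    )
    return received_mbps(result.stdout)


# Hand-written sample for illustration; real reports contain many more fields.
sample = (
    '{"end": {"sum_sent": {"bits_per_second": 83100000.0},'
    ' "sum_received": {"bits_per_second": 82500000.0}}}'
)
print(f"{received_mbps(sample):.1f} Mbps")  # 82.5 Mbps
```

Calling `run_iperf3("<server ip address>")` from a scheduled job would let you track throughput over time.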

On the same network, the throughput between two devices connected wirelessly is 31.1 Mbps:

iPerf is fine when you are able to get two computers on both ends of the network that you want to take measurements on. However, what if you want to measure throughput between a computer and another device that cannot run iPerf, for example, a router?

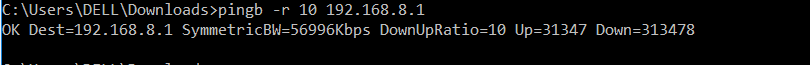

This is one of the limitations of iPerf. Of course, you can try to get a free interface on that router and connect a computer to that interface but this is not always feasible. In such cases, you are better off using alternatives such as pingb which only requires one device as long as the other device can respond to ping packets greater than 1500 bytes.

Optimizing Throughput

The throughput on a network can be improved once the cause of low/reduced throughput has been identified from among those listed under the “Factors that Affect Throughput” section above:

- Bandwidth can be increased to provide more throughput especially in cases where the network is overloaded i.e. the bandwidth cannot support the load on the network.

- Bottlenecks should be identified and removed from the network. This will go a long way in reducing congestion, and with it latency and packet loss/errors. Note that bottlenecks can be the result of medium limitation e.g. using 100 Mbps interfaces instead of 10 Gbps interfaces.

- Faulty devices/components should be replaced and overburdened devices should be upgraded.

- Quality of Service (QoS) can be applied to ensure that critical traffic is unaffected by network congestion. While this will not improve overall throughput on the network, it will ensure good throughput for critical traffic.

What are the Benefits of Throughput in a Network?

Throughput plays a key role in determining a network's overall performance. Here are a few benefits of high throughput in a network:

- Increased Speed: Throughput measures the rate at which data moves across a network. A higher throughput means that your data can travel from one location to another more quickly. Thus, a network with high throughput helps with quick downloads and video streaming without buffering. It even helps users play online games smoothly.

- Increased Reliability of Data Transmission: Data transfer is less likely to be delayed or interrupted over a network with high throughput. For operations requiring data integrity and consistency, like Internet banking or video conferencing, this reliability is crucial.

- Quality of Service (QoS): For networks to manage QoS effectively, throughput is crucial. It contributes to a better user experience by ensuring that key applications (such as VoIP and video conferencing) obtain the required bandwidth and are given priority over other data traffic.

- Optimized Network Efficiency: With the help of throughput data, users can analyze the network and discover areas that require optimization. For smooth data flow, it may be necessary to optimize different areas of a network, for example through hardware upgrades, route path adjustments, and load balancing.

- Scalability: High-throughput networks can be scaled and modified to meet changing demands more easily. They can handle an increase in data traffic, as well as the addition of new users or devices, with less risk of degraded performance.

- Better Security: Sudden increases or decreases in throughput may be a sign of malicious behavior. By monitoring throughput in a network, you can detect security threats in real time and reduce the chance of their recurrence.
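As an illustration of the monitoring idea in the last point, the sketch below flags throughput samples that deviate sharply from the recent baseline. It is a deliberately naive detector with made-up sample values; the function name, window size, and thresholds are assumptions, and production monitoring tools use more robust methods.

```python
from statistics import mean, stdev


def flag_anomalies(samples_mbps, window=5, threshold=3.0, min_sigma=1.0):
    """Return indexes of samples far from the mean of the preceding `window` samples."""
    flagged = []
    for i in range(window, len(samples_mbps)):
        baseline = samples_mbps[i - window:i]
        mu = mean(baseline)
        # min_sigma keeps a very flat baseline from flagging tiny fluctuations.
        sigma = max(stdev(baseline), min_sigma)
        if abs(samples_mbps[i] - mu) > threshold * sigma:
            flagged.append(i)
    return flagged


# Hypothetical per-minute throughput readings in Mbps, with one sudden spike.
samples = [40, 42, 41, 39, 40, 41, 95, 40, 42]
print(flag_anomalies(samples))  # [6]
```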

How to Increase Throughput in a Network?

To improve network performance, it is essential to increase your network's throughput, especially in settings where data demands are rising. Here are a few suggestions that will help you boost network throughput:

- Upgrade Network Hardware Components: You can increase your network's efficiency and speed by upgrading the hardware components that carry its data. This could entail upgrading routers and switches to versions with faster processing speeds and replacing outdated network cables.

- Optimize Wireless Router Settings: Check that your router uses the latest firmware and the Wi-Fi channel with the least interference from nearby networks. Also, enable Quality of Service (QoS) settings to give key devices or apps priority access to bandwidth. By making these enhancements, the wireless network's speed and dependability can be greatly improved.

- Use Load Balancing Technologies: Load-balancing technologies distribute traffic among a number of network resources. By ensuring that no single resource is overloaded with excessive data demands, these solutions avoid bottlenecks and maximize the use of the available network bandwidth. Depending on server health, proximity, and other factors, load balancers automatically direct incoming traffic to the most appropriate resource.

- Install a Dedicated Wireless Connection: Another way to increase throughput is to install a separate and exclusive wireless network for fast data transmission. This will further help reduce interference and congestion from other devices and networks.

- Disable Unnecessary Programs Running in the Background: You might have unnecessary programs running in the background that consume a lot of your resources. As a result, the Internet connection may slow down. By turning them off, you free up network resources for important things like streaming, online gaming, etc.
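To make the load-balancing suggestion more concrete, here is a minimal sketch of one possible selection policy: skip unhealthy servers and send each new connection to the healthy server with the fewest active connections. The `Backend` class, the server names, and the policy itself are illustrative assumptions; real load balancers support several policies and richer health checks.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True
    active_connections: int = 0


def pick_backend(backends):
    """Choose the healthy backend with the fewest active connections."""
    candidates = [b for b in backends if b.healthy]
    if not candidates:
        raise RuntimeError("no healthy backend available")
    return min(candidates, key=lambda b: b.active_connections)


# Hypothetical pool: srv-c has failed its health check and is skipped.
pool = [Backend("srv-a"), Backend("srv-b"), Backend("srv-c", healthy=False)]
for _ in range(4):
    chosen = pick_backend(pool)
    chosen.active_connections += 1

print({b.name: b.active_connections for b in pool})
# {'srv-a': 2, 'srv-b': 2, 'srv-c': 0}
```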

Conclusion

This brings us to the end of this article where we have discussed Network Throughput. We have seen that while bandwidth is the theoretical maximum capacity of a link, throughput is the actual data transfer rate on that link per unit time.

We have discussed how the limitations of the transmission medium, latency, and other factors can affect throughput. Finally, we discussed how throughput can be measured using tools like iPerf and pingb, and also how to optimize throughput in your network.